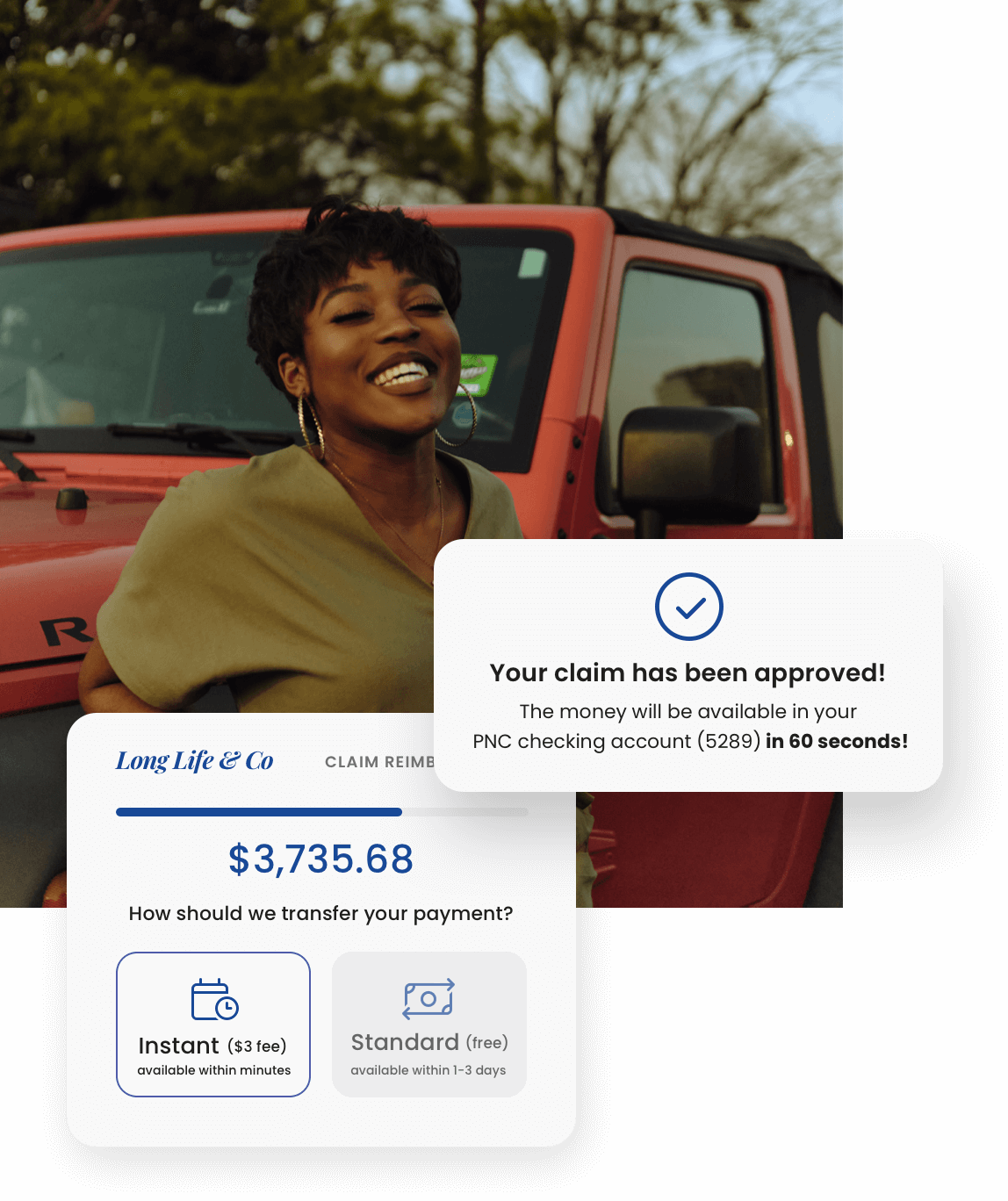

Revolutionize payment speed, certainty, and orchestration.

Fast, reliable payments. Instant bank account verification. Custom-built portal and ledger. Get set up on our modern payment solution in two weeks or less, with informed support at each step of the way.